I haven’t done one of these in a while, but recently I saw an excellent example of what I like to call “Praiseworthy Wrongness”, or someone willing to publicly admit they have erred due to their own bias. I like to highlight these because while in a perfect world no one would make a mistake, the best thing you can do upon realizing you have made one is to own up to it.

Today’s case is from researcher Keith Stanovich, who created something called the Actively Open-Minded Thinking questionnaire back in the 90s. In the authors’ words, this questionnaire was supposed to measure “a thinking disposition encompassing the cultivation of reflectiveness rather than impulsivity; the desire to act for good reasons; tolerance for ambiguity combined with a willingness to postpone closure; and the seeking and processing of information that disconfirms one’s beliefs (as opposed to confirmation bias in evidence seeking).”

For almost 20 years this questionnaire has been in use in numerous psychological studies, but recently Stanovich became troubled when he noted that several studies showed that being religious had a very strong negative correlation (-.5 to -.7) with open-minded thinking. While one conclusion you could draw from this is that religious people are very closed minded, Stanovich realized he had never intended to make any statement about religion. This correlation was extremely strong for a psychological finding, and the magnitude concerned him. He also got worried as he realized that neither he nor anyone else in his lab was religious. Had they unintentionally introduced questions that were biased against religious people? In his new paper “The need for intellectual diversity in psychological science: Our own studies of actively open-minded thinking as a case study”, he decided to take a look.

In looking back over the questions, he realized that the biggest difference seemed to be appearing in questions addressing the tendency towards “belief revision”. These questions were things like “People should always take into consideration evidence that goes against their beliefs”, and he realized they would probably be read differently by religious vs non-religious people. A religious person would almost certainly read that statement and jump immediately to their core values: belief in God, belief in morality, etc. In other words, they’d be thinking of moral or spiritual beliefs. A secular person might have a different interpretation, like their belief in global warming or their belief in H. pylori causing stomach ulcers, that is, their factual beliefs. It would therefore be unsurprising that religious people would be less likely to answer “sure, I’ll change my mind” than secular ones.

To test this, they decided to make the questions more explicit. Rather than ask generic questions, they decided to create a secular and a religious version of each question as well. The modifications are shown here:

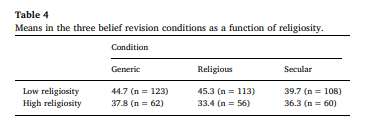

They then randomly assigned groups of people to take a test with each version of the questions, and also asked them to report how religious they were. The results confirmed his suspicions:

When non-religious people were given generic questions, they scored higher on open-mindedness than highly religious people. When the questions cited religious examples, they continued to score as open minded. However, when the questions changed to specific secular examples, such as justice and equality, their scores dropped. Religious people showed the reverse, though their drop with religious questions wasn’t quite as profound. Overall, the negative correlation with religion still remained, but it got much smaller under the new conditions.

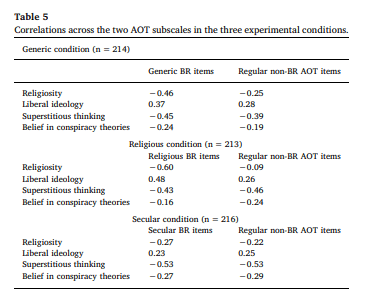

So basically the wording of the belief revision questions produced a correlation with religiosity that ranged from as high as -.60 for specifically religious questions down to -.27 for secular questions. The -.27 was more in line with the correlation for other types of questions on the test (-.22), so they recommended that secular versions be used.

At the end of the paper, things get really interesting. The authors go meta and start to ask why it took them 20 years to figure out this set of questions was biased. A few issues they raise:

- They believed they had written a neutral set of questions, and had never considered that the word “belief” could be interpreted differently by different groups of people.

- They missed the first point “because not a single member of our lab had any religious inclinations at all.”

- The correlations fit their biases, so they didn’t question them. They admitted that if religion had ended up positively correlating with open mindedness, they would probably have re-written the test.

- They believe this is a warning both against having a non-diverse staff and believing in effect sizes that are unrealistically big. While they reiterate that the negative correlation does exist, they admit that they should have been suspicious about how strong it was.

Now whatever you think of the original error, it is damn impressive to me for someone to come forward, publicly say that 20 years’ worth of their work may not be totally valid, and attempt to make amends. Questionnaires and validated scales are used widely in psychology, so the possible errors here go beyond just the work of this one researcher. Good on them for coming forward, and for doing research to actually contradict themselves. The world needs more of this.