I got in an interesting discussion this weekend with some folks about internet trolls. One person had made an offhanded comment about “anonymity bringing out the worst in people”, and was surprised when I informed them that the current research didn’t really support them in that. Depending on your perspective I am either the best or worst person to ever get in one of these discussions with, because I decided to do a little roundup of the current research on internet trolls. Hang in there with me, and you get a bonus SHEEPLE at the end:

- Defining trolling is actually kind of hard. While most of us would say that trolling is a sort of “you know it when you see it” issue, the definitions used actually vary a bit. For example, this study found trolls by asking participants directly “do you like to troll”, this study just counted Tweets that contained “bad words”, this study had researchers read through individual posts and rank their “trollishness”, and this study had researchers track whole comment histories of banned forum users. None of those are necessarily wrong, but they will all catch slightly different sets of behavior and groups of commenters.

- Trolls who cause chaos online also like to cause chaos for researchers. To the surprise of no one, those who admit they like to troll online also get a kick out of messing with researchers. When Whitney Phillips was writing her book about trolling, she tried to interview self-professed trolls to see what motivated them. Unfortunately they kept making up stories and then hanging up on her. That makes you wonder about research where people had to self-identify as trolls, like this widely reported study that said trolls tend to be sadists. Are trolls really sadists, or do those who say “yes I like to troll” also like to answer “yes” to questions on sadism quizzes? And is answering “yes” as a joke substantively different from answering “yes” in all seriousness?

- Who gets targeted is a complicated question. One of the issues that arises due to the different definitions of trolls (see #1) is the question of “who gets targeted”. At this point “trolling” can be used to describe anything from irritating but benign behavior to criticism of all types to abuse, threats and harassment. With so many varieties of trolling, figuring out who the targets are can be more difficult than it first appears. For example, this British marketing group found that male celebrities got twice as much Twitter harassment as female celebrities. Note that the standard for “harassment” used there was a “bad word” filter, and neither the number nor the content of the celebrities’ Tweets was taken into account. Given that Piers Morgan, Ricky Gervais, and Katie Hopkins ended up as the top three receivers of abuse, content appears to matter. Anyway, Cathy Young has this to say about the gender breakdown and Amanda Hess replied with this. We do know that young people (18-24) are the most likely to have problems, and there is a gender difference in the type of harassment. From Pew Research:

This is all age ranges:

Note: all of those terms were self-defined and self-reported, and there was no controlling for where those things occurred. In other words, someone being called offensive names out of the blue in an innocuous situation was counted the same as someone being called a name in the middle of a heated debate.

- Real names don’t necessarily help. For nearly as long as trolls have been discussed, people have been mentioning the enabling role of anonymity. A recent study of German social media suggests that anonymity may be less important than we think: using real names frequently made people more hostile, not less. It turns out that the social signaling/credibility gained from online posts can actually empower people to get meaner. Oh boy.

- Controlling your own emotions might actually help. The most common advice dispensed on this whole topic is of course “don’t feed the trolls”. However, it can be a little tough figuring out what that means. When these researchers tried to create a predictive algorithm to see if they could identify trolls by their first ten posts on a site, they discovered that trolls tend to escalate when they have posts unfairly deleted. In order to find “unfair” deletions, they blinded an assessor to the identity of the poster and asked them whether each deleted post was offensive or not. It turns out that trolls really were more likely to have inoffensive posts deleted, and that their postings worsened significantly after that happened. Now of course this may have been the goal: moderators who are sick of someone’s posts entirely may get capricious in the hope that the poster will get mad enough to leave. Still, it is an interesting insight. Also interesting from the paper: trolls comment more often but in fewer threads, they have worse overall writing quality, and they get more responses than other users. Yup, designed to irritate.
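For the curious, here is a rough sketch of why the “bad word” filter caveat from the targeting point above matters. Everything in it is made up for illustration: the word list, the two accounts, and the numbers are hypothetical and do not come from any of the studies mentioned. It only shows that a raw count of flagged replies and a count adjusted for how much someone posts can rank the same two accounts differently.

```python
# All data below is hypothetical, invented purely for illustration.
BAD_WORDS = {"idiot", "moron"}  # stand-in list, not the one any study used


def is_flagged(tweet: str) -> bool:
    """Return True if the tweet contains any word from BAD_WORDS."""
    words = {w.strip(".,!?\"'").lower() for w in tweet.split()}
    return not BAD_WORDS.isdisjoint(words)


def abuse_summary(replies: list[str], tweets_posted: int) -> dict:
    """Count flagged replies, raw and per tweet the account posted."""
    flagged = sum(is_flagged(r) for r in replies)
    return {"flagged": flagged, "per_tweet": flagged / tweets_posted}


# Hypothetical account A: posts a lot, gets many flagged replies.
account_a = abuse_summary(["You idiot!"] * 20 + ["Nice post"] * 30, tweets_posted=100)
# Hypothetical account B: posts rarely, gets fewer flagged replies overall.
account_b = abuse_summary(["What a moron."] * 5 + ["Agreed"] * 5, tweets_posted=10)

print(account_a)  # A has the higher raw count
print(account_b)  # B has the higher count per tweet posted
```

A filter like this also cannot tell an insult from friends joking around, since `is_flagged("lol you moron, love you")` comes back `True`, which is part of why the definition used ends up mattering so much.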

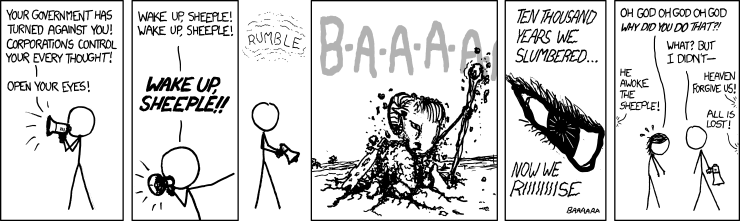

Unfortunately none of my research turned up any guidance on how likely this is to happen:

Stay safe out there.