I am fascinated by personality testing. Myers-Briggs, Big 5, Enneagram, Buzzfeed quiz, yes please. I’ll take them. There’s something about assigning humanity to little boxes that just, I don’t know, appeals to me. Maybe that’s the ENTJ in me, or my moderate conscientiousness, or the fact that according to this quiz I’m a sea monster. Given this, I realized it was high time I did a research roundup on some of the better-known facets of personality testing. This week I’m taking on introverts, and if all goes well next week will be extroverts.

A few things up front: first, on introvert/extrovert scales, I score right in the middle. This makes me one of the dreaded “ambiverts” who apparently can’t make up their minds. Second, while the definition of introvert is sometimes a little lacking (see point #1 below) it’s generally defined as someone who gets their energy from being alone. With the rise of the internet, introverts started kind of having a moment, and there’s been a rash of books/memes/Buzzfeed lists about how unappreciated they all are. So what’s going on here, and what does the research say?

- Introversion doesn’t always have a definition. One of the first rather odd things I learned about introverts is that the most commonly used academic definition is... “not an extrovert”. For example, in the Big Five personality scale, “introversion” is not technically a trait, but “low extraversion” is. This may not seem like a big deal, but it can mean that we are lumping different things under “introvert” that may not be similar to one another. As introversion has become trendier, I have seen more and more people file normal social or physical limitations under “introversion”. For example, a rather extroverted friend of mine recently announced she was pretty sure she was actually an introvert. When asked why she thought this, she mentioned that she had been out 3 different nights the week before and that by the weekend she had been too exhausted to go to another party. When I asked if maybe this was simply lack of sleep, she responded, “But extroverts get their energy from people, so I should have been fine!” No. People get their energy from rest. Almost no one can substitute human interaction for sleep too often and feel good about it. Wanting to sleep isn’t “introverted” merely because you’re not socializing while you do it.

- There may be 4 types of introversion. When psychologists started actually looking into introversion, they developed a theory that there may be 4 types of behavior we’ve been lumping under “introvert”: social introversion, thinking introversion, anxious introversion and restrained introversion. This was a helpful list for me, as I am moderately socially introverted (I prefer small groups) and highly introverted in my thinking, but I have very little social anxiety and I’m not very restrained. Thus it makes sense that I strongly resonate with some descriptions of introversion and not others. The social anxiety piece can also be important to recognize as a separate category. I have a few friends who thought they were introverted when they were in high school, only to discover that they really just didn’t like their classmates. While most introverts fight the perception that introversion = shyness, it’s probably good to note that most shy or socially anxious people will end up self-selecting as introverts.

- Stimulation matters. The four categories mentioned in #2 are still in the research phase, but there are other ways of looking at introversion as well. Some of the very first literature on introversion (and extroversion) actually defined it as an aversion to (or need for) extra environmental stimulation. I like this framing a bit better than the social framing, because it covers things like loud noises and fast music, and it explains why coffee only helps extroverts (basically it increases your sensitivity to stimulation, which is the last thing most introverts need when they’re trying to get things done). This explains why I’ve occasionally had introverted coworkers complain that I talked too much, even when I was studiously avoiding talking to them, or why an introvert I mentioned this to always has to tell her (extrovert) husband to shut the TV off. Social situations may be taxing not because of social issues, but simply because of the stimulation of hearing so many people talk.

- This can lead to some judginess. With all the recent attention on introverts in the workplace, it’s interesting to note that there’s some evidence that introverts actually judge extroverts more harshly than the other way around. In some studies done by Florida State University, introverted MBA students were more likely to give low marks to extroverted students, to recommend lower bonuses for them, and to decline to recommend them for promotions. This was true even when the researchers manufactured the scenarios and controlled for performance. The extroverts in the study awarded bonuses/promotions/high marks much more in line with actual performance on the tasks and did not penalize introverts. The researchers hypothesize that due to the stimulation issue (#3) introverts may just have a harder time working with extroverts regardless of their competence. I also have to wonder if there’s a bit of the Tim Tebow Fallacy going on here... with all the press about how extroverts do better in business, many introverts (especially in MBA programs, as these research subjects were) could feel that by marking extroverts down they are balancing the scales a bit. We don’t know how this works in the general population, but it is worth keeping in mind.

- Introverts may (wrongly) think they’re the minority. There’s a bit of confusion over what percentage of the population is introverted... which is not particularly surprising when you consider the weird definitions we considered in #1-#3. At this point though, most estimates put it at about 50% (unless you consider “ambivert” a category). So why do introverts tend to feel outnumbered? Well, it’s a statistical quirk called the majority illusion. Basically, because extroverts are more likely to have lots of friends, any given person’s friend list is more likely to be heavy on extroverts. This skews the perception of the numbers, and leaves people with the impression that they know more extroverts because there are more extroverts. So introverts, take heart. There are more of you out there than you think.
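If you want to see the majority illusion for yourself, here is a minimal sketch of a toy simulation. Everything in it is my own assumption rather than anything from the research: the function name `simulate_friend_shares`, the population of 200, the idea that extroverts are 3 times as "sociable", and the rule that two people become friends with a probability proportional to both of their sociability weights. The only thing borrowed from the discussion above is the setup: a true 50/50 split, with extroverts tending to have more friends.

```python
import random


def simulate_friend_shares(n_people=200, base_prob=0.01, extrovert_weight=3, seed=0):
    """Toy model: return (true extrovert share, average extrovert share
    among the friends of introverts)."""
    rng = random.Random(seed)

    # First half are introverts, second half extroverts: a true 50/50 split.
    is_extrovert = [i >= n_people // 2 for i in range(n_people)]

    # Assumption: extroverts are more "sociable", so any friendship
    # involving them is more likely to form.
    weight = [extrovert_weight if e else 1 for e in is_extrovert]

    friends = [[] for _ in range(n_people)]
    for i in range(n_people):
        for j in range(i + 1, n_people):
            if rng.random() < base_prob * weight[i] * weight[j]:
                friends[i].append(j)
                friends[j].append(i)

    # For each introvert with at least one friend, what fraction of
    # their friends are extroverts?
    shares = []
    for i in range(n_people):
        if not is_extrovert[i] and friends[i]:
            extrovert_friends = sum(is_extrovert[f] for f in friends[i])
            shares.append(extrovert_friends / len(friends[i]))

    true_share = sum(is_extrovert) / n_people
    perceived_share = sum(shares) / len(shares)
    return true_share, perceived_share


true_share, perceived_share = simulate_friend_shares()
print(f"Actual share of extroverts: {true_share:.0%}")
print(f"Share of extroverts among an average introvert's friends: {perceived_share:.0%}")
```

In this toy world exactly half the people are extroverts, but the typical introvert's friend list should come out well over half extroverts, simply because extroverts show up on more friend lists. Change `extrovert_weight` to 1 and the gap should mostly disappear.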

Come back next week and we’ll take a look at extroverts!

And here is

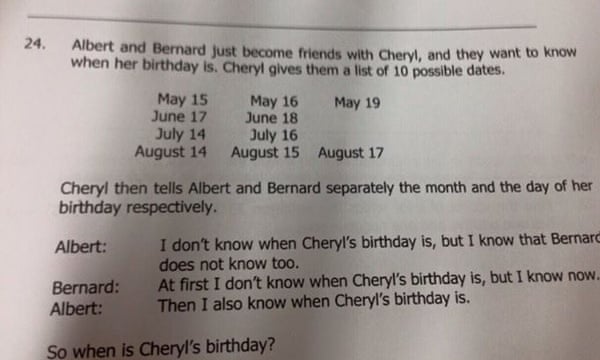

This is all age ranges:

Note: all of those terms were self defined and self reported, and there was no controlling for where those things occurred. In other words, people being called offensive names out of the blue in an innocuous situation were counted the same as someone calling you a name in the middle of a heated debate.