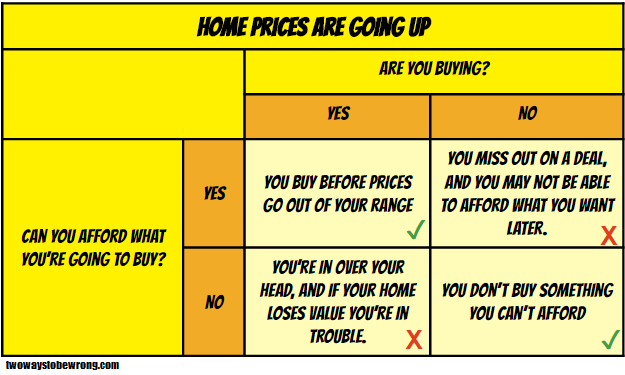

I’ve often repeated on this blog that there are really two ways to be wrong. I bring it up so often because it’s important to remember that being right does not always mean preventing error, but at times requires us to consider how we would prefer to err.

I bring all this up because I had to make a very tough decision this past Saturday, and it all started with Batman.

It was 3 am or so when I heard my 4-year-old son crying. This wasn’t terribly unusual…between nightmares or other middle-of-the-night issues this happens just about every other week. I went out in the hall to see what was happening, and I found him crying hysterically. I picked him up and asked him what was wrong, noticing that he seemed particularly upset and very red. “Mama, I swallowed Batman and he’s stuck in my throat and I can’t get him out,” he wailed. My heart shot to my throat. He had a small Batman action figure he had taken to bed with him. I had thought it was too big to swallow, and he was a little old for swallowing toys…but in his sleep I had no idea what he could have done. Before I could even look in his mouth he started making a horrible coughing/choking sound I’d never heard before and was gasping for air through the tears. I looked in his mouth and saw nothing, but thought I felt something.

I woke my husband up, and we briefly debated what to do. Our son was still breathing, but he sounded horrible. I was unsure what, if anything, was in his throat. I had never called 911 to my own house before, and I ran down the other options. Call the pediatrician? They could take an hour to call back. Drive to the ER? What if something happened in the middle of the highway? Call my mother? She couldn’t do much over the phone. Google? Seriously? Does “Google hypochondriac” have an antonym that means “person googling something that’s way too important for Google”?

Realizing I had no way of getting a better read on the situation and with my son still horrifically coughing and gasping in the background, I took a deep breath and thought about being wrong. Would I rather risk calling 911 unnecessarily, or risk my child starting to fully choke on an object that might be a funny shape and tough to get out with the Heimlich maneuver? Phrased that way, the answer was immediately clear. I made the call. The whole train of thought plus discussion with my husband took less than two minutes.

The police and EMTs arrived a few minutes later. My son had started to calm down, and they were great with him. They examined his mouth and throat, and were relatively sure there was nothing in the airway. They found the Batman toy still in his bed. Knowing that his breathing was safe, we drove to the ER ourselves to make sure he hadn’t swallowed anything that was now in his stomach, and that his throat hadn’t gotten irritated or reactive. He still had the horrible-sounding cough. He brought Batman with him.

In the end, there was nothing in his stomach. He had spasmodic croup (first time he’s had croup at all), and the doctor thinks that his “I swallowed Batman” statement was his way of trying to explain to us that he woke up with either a spasm or painful mucus blockage in his throat. The crying had made it worse, which was why he sounded so bad when I went to him. While we were there he picked up Batman, pointed to the tiny cloth cape and said “see, that’s what was in my throat!”. We got some steroids to calm his throat down, and we were on our way home. We all went back to bed.

In the end, I was wrong. I didn’t really need to call 911, and we could have just driven to the hospital ourselves. We needed the stomach x-ray for reassurance and the steroids so he could get some sleep, but there was no emergency. But I tell this whole story because this is where examining up front the preferred way of being wrong comes in handy: I had already acknowledged that being wrong in this way was something I could live with. My decision making rested in part on being wrong in the right direction. I can live with an unnecessary call. I couldn’t have lived with the alternative way of being wrong.

Written out here, this seems so simplistic. However, in a (potential) emergency, the choices that go into each box can vary the calculation wildly.

- Can you get more information to increase your chances of being right? (I couldn’t, it was 3 in the morning)

- How soon will the consequences occur if you’re wrong? (Choking is a minutes-and-seconds issue)

- How prepared are you to deal with the worst outcome? (I know the Heimlich, but have never done it on a child and was worried that an oddly shaped object might make it difficult)

- How severe are the consequences? (Don’t even want to think about this one)

That’s a lot to think about in the middle of the night, but I was glad I had the general mental model on hand. I think it helped save some extra panic, and if I had it to do over again I’d make the same decision.

As for my son, the next morning he informed me that from now on “I’m going to keep my coughs in my mouth. They scare mama.” Someone clearly needs his own contingency matrix.