This is a series of posts featuring anecdotes from the book The Signal and the Noise by Nate Silver. Read all the Signal and the Noise Posts here, or go back to the Chapter 2 post here.

Baseball talk. The stats guys vs scouts debates are my favorite.

I’ve often repeated on this blog that there are really two ways to be wrong. I bring it up so often because it’s important to remember that being right does not always mean preventing error, but at times requires us to consider how we would prefer to err.

I bring all this up because I had to make a very tough decision this past Saturday, and it all started with Batman.

It was 3 am or so when I heard my 4-year-old son crying. This wasn’t terribly unusual…between nightmares or other middle-of-the-night issues this happens just about every other week. I went out in the hall to see what was happening, and I found him crying hysterically. I picked him up and asked him what was wrong, noticing that he seemed particularly upset and very red. “Mama, I swallowed Batman and he’s stuck in my throat and I can’t get him out” he wailed. My heart shot to my throat. He had a small Batman action figure he had taken to bed with him. I had thought it was too big to swallow, and he was a little old for swallowing toys….but in his sleep I had no idea what he could have done. Before I could even look in his mouth he started making a horrible coughing/choking sound I’d never heard before and was gasping for air through the tears. I looked in his mouth and saw nothing, but thought I felt something.

I woke my husband up, and we briefly debated what to do. Our son was still breathing, but he sounded horrible. I was unsure what, if anything, was in his throat. I had never called 911 to my own house before, and I ran down the other options. Call the pediatrician? They could take an hour to call back. Drive to the ER? What if something happened in the middle of the highway? Call my mother? She couldn’t do much over the phone. Google? Seriously? Does “Google hypochondriac” have an antonym that means “person googling something that’s way too important for Google”?

Realizing I had no way of getting a better read on the situation and with my son still horrifically coughing and gasping in the background, I took a deep breath and thought about being wrong. Would I rather risk calling 911 unnecessarily, or risk my child starting to fully choke on an object that might be a funny shape and tough to get out with the Heimlich maneuver? Phrased that way, the answer was immediately clear. I made the call. The whole train of thought plus discussion with my husband took less than two minutes.

The police and EMTs arrived a few minutes later. My son had started to calm down, and they were great with him. They examined his mouth and throat, and were relatively sure there was nothing in the airway. They found the Batman toy still in his bed. Knowing that his breathing was safe, we drove to the ER ourselves to make sure he hadn’t swallowed anything that was now in his stomach, and that his throat hadn’t gotten irritated or reactive. He still had the horrible sounding cough. He brought Batman with him.

In the end, there was nothing in his stomach. He had spasmodic croup (first time he’s had croup at all), and the doctor thinks that his “I swallowed Batman” statement was his way of trying to explain to us that he woke up with either a spasm or painful mucus blockage in his throat. The crying had made it worse, which was why he sounded so bad when I went to him. While we were there he picked up Batman, pointed to the tiny cloth cape and said “see, that’s what was in my throat!”. We got some steroids to calm his throat down, and we were on our way home. We all went back to bed.

In the end, I was wrong. I didn’t really need to call 911, and we could have just driven to the hospital ourselves. We needed the stomach x-ray for reassurance and the steroids so he could get some sleep, but there was no emergency. But I tell this whole story because this is where examining up front the preferred way of being wrong comes in handy: I had already acknowledged that being wrong in this way was something I could live with. My decision making rested in part on being wrong in the right direction. I can live with an unnecessary call. I couldn’t have lived with the alternative way of being wrong.

Written out here, this seems so simplistic. However, in a (potential) emergency, the choices that go into each box can vary the calculation wildly.

That’s a lot to think about in the middle of the night, but I was glad I had the general mental model on hand. I think it helped save some extra panic, and if I had it to do over again I’d make the same decision.

As for my son, the next morning he informed me that from now on “I’m going to keep my coughs in my mouth. They scare mama.” Someone clearly needs his own contingency matrix.

This is a series of posts featuring anecdotes from the book The Signal and the Noise by Nate Silver. Read the Chapter 1 post here.

Chapter 2 of The Signal and the Noise focuses on why political pundits are so often wrong. When TV channels select for those making crazy predictions, it turns out accuracy rates go way down. You can either get bold, or you can be right, but very rarely can you be both.

Basically, networks don’t care about false positives….big predictions that don’t come true. What they do care about is false negatives….possibilities that don’t get raised. They consider the first just understandable bluster, but the second is unforgivable. So next time you wonder why there are so many stupid opinions on TV, remember that’s a feature, not a bug.

Read all The Signal and the Noise posts here, or go back to Chapter 1 here.

I’ve been reading Nate Silver’s “The Signal and the Noise” recently, and pretty much every chapter seems to lend itself to a contingency matrix. Each chapter is focused around a different prediction issue, and Chapter 1 is around the housing bubble and the incorrect valuation of the CDO market.

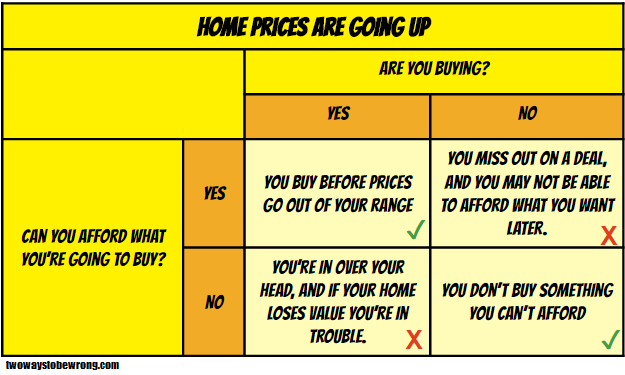

I wasn’t going to get into CDO ratings, but here’s the housing bubble:

It should be noted that swapping the word “home” in the title for any other product describes pretty much every market bubble ever. Color scheme taken from the cover art of the hardcover version, or maybe the Simpsons.

See all The Signal and the Noise posts here, or go to Chapter 2 here.

In my post from Sunday, I talked about base rates and how police investigative techniques can go wrong. I specifically focused on testing methods for drug residue, which are not always as accurate as you might hope.

On an interestingly related note, today I was reading the chapter on logarithms from “In Pursuit of the Unknown: 17 Equations That Changed the World” (one of my math books I’m reading this year). It was discussing John Napier, a Scottish mathematician who invented logarithms in the early 1600s. Napier was an interesting guy….friend of Tycho Brahe, brilliant mathematician, and possible believer in the occult. For reasons possibly having to do with one of those last two, he apparently carried a black cockerel (rooster) around with him a lot.

It’s actually not clear if he really was involved in the occult, but he did tell everyone he had a magic rooster. He used it to catch thieves. Here’s what his strategy was:

No idea what the base rate was here, or if it would hold up in court today….but maybe something to consider if the budget gets cut.

(Special thanks to the Shakespeare translator for helping me out a bit on this one).

After my last post on the two different ways of being wrong, the Assistant Village Idiot brought up the dwarves from the book “The Last Battle” from the Chronicles of Narnia series. I was curious what the contingency matrix for that book would look like. I haven’t read it in a while, but I quickly realized there were actually three pretty distinct ways of being wrong in that book. As far as I can tell, the matrix looks like this:

You’re welcome.

One of the most interesting things I’ve gotten to do since I started blogging about data/stats/science is to go to high school classrooms and share some of what I’ve learned. I started with my brother’s Environmental Science class a few years ago, and that has expanded to include other classes at his school and some other classes elsewhere. I often get more out of these talks than the kids do…something about the questions and immediate feedback really pushes me to think about how I present things.

Given that, I was intrigued by a call I got from my brother yesterday. We were talking a bit about science and skepticism, and he mentioned that as the year wound down he was having to walk back some of what I presented to his class at the beginning of the year. The problem, he said, was not that the kids had failed to grasp the message of skepticism…but rather that they had grasped it too well. He had spent the year attempting to get kids to think critically, and was now hearing his kids essentially claim it was impossible to know anything because everything could be manipulated.

Oops.

I was thinking about this after we hung up, and how important it is not to leave the impression that there’s only one way to be wrong. In most situations that need a judgment call, there are actually two ways to be wrong. Stats and medicine have a really interesting tool for showing this phenomenon: a 2×2 contingency matrix. Basically, you take two different conditions and sort how often they agree or disagree and under what circumstances those happen.
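For anyone who likes to see the sorting spelled out, here is a minimal Python sketch of that tallying. The function name `contingency_matrix` and the six observations are made up purely for illustration; each pair is (what was actually true, what the test or our belief said).

```python
from collections import Counter


def contingency_matrix(pairs):
    """Tally (reality, test) boolean pairs into the four cells of a 2x2 matrix."""
    counts = Counter(pairs)
    return {
        "true_positive": counts[(True, True)],     # true, and we said true
        "false_negative": counts[(True, False)],   # true, but we said false
        "false_positive": counts[(False, True)],   # false, but we said true
        "true_negative": counts[(False, False)],   # false, and we said false
    }


# Hypothetical data: (claim is actually true, we believed it).
observations = [
    (True, True), (True, True), (True, False),
    (False, True), (False, False), (False, False),
]

matrix = contingency_matrix(observations)
for cell, count in matrix.items():
    print(f"{cell}: {count}")
```

The two "agree" cells are the ones where we got it right, and the two "disagree" cells are the two different ways of being wrong.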

For example, for my brother’s class, this is the contingency matrix:

In terms of outcomes, we have 4 options:

Of those four options, #2 and #3 are the two we want to avoid. In those cases the reality (true or not) clashes with the test (in this case our assessment of the truth). In my talk and my brother’s later lessons, we focused on eliminating #3. One way of doing this is to be more discerning about what we believe and what we don’t, but many people can leave with the impression that disbelieving everything is the way to go. While that will absolutely reduce the number of false positive beliefs, it will also increase the number of false negatives. Now, depending on the field this may not be a bad thing, but overall it’s just substituting one lack of thought for another. What’s trickier is to stay open to evidence while also being skeptical.
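The trade-off can be shown with a toy sketch, assuming each claim comes with some "evidence score" and we believe anything at or above a threshold. The scores, the thresholds, and the `count_errors` helper are all hypothetical; the point is only how the two error counts move against each other.

```python
def count_errors(claims, threshold):
    """Believe a claim when its evidence score meets the threshold.

    Returns (false_positives, false_negatives).
    """
    false_positives = sum(
        1 for is_true, evidence in claims
        if evidence >= threshold and not is_true
    )
    false_negatives = sum(
        1 for is_true, evidence in claims
        if evidence < threshold and is_true
    )
    return false_positives, false_negatives


# Hypothetical claims: (actually true?, strength of evidence from 0 to 1).
claims = [
    (True, 0.9), (True, 0.6), (True, 0.3),
    (False, 0.7), (False, 0.4), (False, 0.1),
]

believe_everything = count_errors(claims, threshold=0.0)
disbelieve_everything = count_errors(claims, threshold=1.1)
weigh_the_evidence = count_errors(claims, threshold=0.5)

print("believe everything:   ", believe_everything)
print("disbelieve everything:", disbelieve_everything)
print("weigh the evidence:   ", weigh_the_evidence)
```

With these made-up numbers, disbelieving everything drives the false positives to zero but turns every true claim into a false negative, while the middle threshold leaves a few errors of each kind.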

It’s probably worth mentioning that not everyone gets into these categories honestly…some people believe a true thing pretty much by accident or fail to believe a false thing for bad reasons. Every field has an example of someone who accidentally ended up on the right side of history. There also aren’t always just two possibilities; many scientific theories have shades of gray.

Caveats aside, it’s important to at least raise the possibility that not all errors are the same. Most of us have a bias towards one error or another, and will exhort others to avoid one at the expense of the other. However, for both our own sense of humility and the full education of others, it’s probably worth keeping an eye on the other way of being wrong.