Chapter 7 was about extrapolation and predictions that influence their own accuracy. One of my favorite examples was predictions about disease spread:

5 Things You Should Know About the Great Flossing Debate of 2016

I got an interesting reader question a few days ago, in the form of a rather perplexed/angry/tentatively excited message asking if he could stop flossing. The asker (who shall remain nameless) was reacting to a story from the Associated Press called “The Medical Benefits of Dental Floss Unproven”. In it, the AP tells the tale of trying to find out why the government was recommending daily flossing when there appeared to be no evidence supporting the practice. They filed a Freedom of Information Act request, and not only did they never receive any evidence, but they later discovered the Department of Health and Human Services had dropped the recommendation. The reason? The effectiveness had never been studied. Oops.

So what do you need to know about this controversy? Is it okay to stop flossing? Here are 5 things to help you make up your mind:

- The controversy isn’t new. While the AP story seems to have brought this issue into the public eye, people have been trying to call attention to it for a few years now. The article I linked to is from 2013, and it cites research from the last decade attempting to figure out whether flossing actually works. While flossing has been recommended by dentists since about 1902 and by the US government since the 1970s, it has not gone unnoticed that it’s never been rigorously studied.

- The current studies are a bit of a mess. Okay, so if everyone kinda knew this was a problem, why hasn’t it been resolved? Well, it turns out it’s actually really freaking difficult to resolve something like this. The problem is two-fold: people hate flossing, and flossing is hard to do correctly. In some studies, people who were flossed by a hygienist every day had fewer cavities. However, when the same studies looked at people who had been trained to floss themselves, they found no difference between them and those who didn’t floss. Many other studies found only tiny effects, and a meta-analysis concluded that there was no real evidence flossing prevented gingivitis or plaque buildup. Does this require more time investment? Better technique? Or is it just that conscientious people who brush are pretty much okay either way? We don’t actually know….thus the controversy.

- Absence of evidence isn’t evidence of absence. All that being said, it’s important to note that no one is saying flossing is bad for you. At worst it may be useless, or at least useless the way most of us actually do it. However, most dentists agree that you need to do something to remove bacteria and plaque from between your teeth, and that shouldn’t be taken lightly. It’s absolutely great for people to call out the American Dental Association and the Department of Health and Human Services for recommendations without evidence, but we shouldn’t make the mistake of believing that this proves flossing is useless. That assertion also has no evidence.

- Don’t underestimate the Catch-22 of research ethics. Okay, so now that everyone’s aware of this, we can do a really great, rigorous study on this, right? Well…maybe not. Clinical trial research ethics dictate that research should have a favorable cost-benefit ratio for participants. Since every major dental organization endorses flossing, researchers would have to knowingly ask some participants to do something they actually thought was damaging to them. That would be extremely tough to get past an Institutional Review Board for more than a few months. This leaves observational studies, which of course are notorious for being unable to settle correlation/causation issues and probably won’t end the debate. Additionally, some dentists who have commented are concerned about how much of the limited research funding should be spent on proving something they already believe to be true. None of these are easy questions to answer.

- There may not be a precise answer. As with many health behaviors, it’s important to remember that flossing isn’t limited to a binary yes/no. It may turn out that flossing twice a week is just as effective as flossing every day, or it may turn out they’re dramatically different. There’s some evidence that using mouthwash every day may actually be more effective than flossing, but would some of each be even better, or the same? Despite the lack of evidence for the “daily” recommendation, I do think it’s worth listening to your dentist on this one and at least attempting to keep it in your routine. Unlike, oh, say, the supplement industry, I’m not really sure “Big Floss” is making a lot of money on the whole thing. On the other hand, it doesn’t appear anyone should feel bad for missing a few days, especially if you use mouthwash regularly.

So after reviewing the controversy, I have to say I will probably keep flossing daily. Or rather, I’ll keep aiming to floss daily, because that has literally never translated into more than 3 times a week. I will probably increase my use of mouthwash based on that study, but that’s something I was meaning to do anyway. Whether it causes a behavior change or not, though, we should all be happy with a push for more evidence.

Men, Masculinity Threats and Voting in 2016

Back in February I did a post called Women, Ovulation and Voting in 2016, about various researchers’ attempts to prove or disprove a link between women’s menstrual cycles and their voting preferences. As part of that critique, I brought up a point Andrew Gelman made about the inherently dubious nature of any claim to have found a 20+ point swing in voting preference. People just don’t tend to vary their party preference that much over anything, so the claim on its face is suspect.

I was thinking of that this week when I saw a link to this HBR article from back in April that sort of gender-flips the ovulation study. In this research (done in March), men were asked whether they would vote for Trump or Clinton if the election were held today. Half of the men were first asked how much their wives earned in comparison to them; the other half got that question after they’d stated their political preference. The question was intended as a “gender prime” to get men thinking about gender and to present a threat to their sense of masculinity. The results showed that men who had to think about gender roles before stating a political preference showed a 24-point shift in voting patterns: the “unprimed” men (asked about income after political preference) preferred Clinton by 16 points, while the “primed” men preferred Trump by 8 points. When the question was changed to Sanders vs Trump, the priming didn’t change the gap at all. For women, being “gender primed” actually increased support for Clinton and decreased support for Trump.

Now, given my stated skepticism of 20+ point swing claims, I decided to check out what happened here. The full results of the poll are here, and when I looked at the data one thing really jumped out at me: a large share of the increased support for Trump came from people switching from “undecided/refuse to answer/don’t know” to “Trump”. Check it out, and keep in mind the margin of error is +/-3.9 points:

So basically, men who were primed were more likely to give an answer (and that answer was Trump), and women who were primed were less likely to answer at all. For the Sanders vs Trump numbers, the same held true for men:

In both cases there was about a 10-point swing in men who wouldn’t answer the question when asked candidate preference first, but would answer if they were “primed” first. Given that the margin of error was +/-3.9 overall, this swing seems to be the critical factor to focus on…..yet it was not mentioned in the original article. One could argue that hearing about gender roles made men more opinionated, but isn’t it also plausible that the order of the questions caused a subtle selection bias? We don’t know how many men hung up on the pollster after being asked about their income relative to their wives’, or whether that question encouraged other men to stay on the line. It’s interesting to note that men who were asked about their income first were more likely to say they outearned their wives, and less likely to say they earned “about the same” as them…..which I think at least suggests a bit of selection bias.
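For anyone curious where a figure like +/-3.9 comes from, here’s the textbook margin-of-error formula for a proportion as a minimal Python sketch. It assumes a simple random sample and 95% confidence, and uses p = 0.5, which gives the widest margin. For the 692 respondents mentioned below it works out to about +/-3.7 points; the published +/-3.9 is a bit larger, which I’d guess reflects weighting or a slightly different sample size, though I haven’t checked the poll’s methodology. The 346-person half-sample is hypothetical, just to show that any comparison within a subgroup carries a wider margin than the headline number.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Half-width of an approximate 95% confidence interval for a proportion,
    in percentage points. Assumes a simple random sample of size n."""
    return 100 * z * math.sqrt(p * (1 - p) / n)

full = margin_of_error(692)  # the whole sample
half = margin_of_error(346)  # hypothetical half-sample (e.g. one question order)
print(f"n=692: +/-{full:.1f} points")
print(f"n=346: +/-{half:.1f} points")
```

Because the margin shrinks with the square root of n, halving the sample widens it by a factor of about 1.4, not 2.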

As I’ve discussed previously, selection bias can be a big deal…and political polls are particularly susceptible to it. I mentioned Andrew Gelman earlier, and he had a great article this week about his research on “systemic non-response” in political polling. He looked at overall polling swings and used various methods to see whether he could differentiate between changes in candidate perception and changes in who picked up the phone. His data suggest that about 66-85% of polling swings are actually due to changes in how willing Republicans and Democrats are to answer pollsters’ questions, as opposed to real changes in perception. This includes widely reported phenomena such as the “post-convention bounce” or “post-debate effects”. This doesn’t mean the effects studied in these polls (or the studies I covered above) don’t exist at all, but that they may be an order of magnitude more subtle than suggested.

So whether you’re talking about ovulation or threats to the male ego, I think it’s important to remember that who answers is just as important as what they answer. In this case, 692 people were being used to represent the 5.27 million New Jersey voters, so the potential for bias is, well, gonna be yuuuuuuuuuuuuuuuuuuge.

The Signal and the Noise: Chapter 6

I’ve been going through the book The Signal and the Noise, and pulling out some of the anecdotes into contingency matrices. Chapter 6 covers margin of error and communicating uncertainty.

There’s a great anecdote in the opening of this chapter about flood heights and margin of error. If your levee is only built to contain 51 feet of water, then you REALLY need to know that the weather service prediction is 49 feet +/- 9, not just 49 feet.

This is bad enough, but Silver also points out that we almost never get a margin of error or uncertainty for economic predictions. This is probably why they’re all terrible, especially if they come from a politically affiliated group.

The lesson here is knowing what you don’t know is sometimes more important than knowing what you do know.
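To put rough numbers on the levee example, here’s a Python sketch. It assumes the forecast error is normally distributed and that “+/- 9” describes a 95% interval; both are my assumptions, not necessarily how the weather service defined its margin. Under those assumptions, a 49-foot forecast gives roughly a one-in-three chance of topping a 51-foot levee, which is a very different message from “49 feet.”

```python
import math

def prob_exceeds(threshold, forecast, margin, z=1.96):
    """P(actual > threshold) if the outcome is Normal(forecast, sigma).

    Assumes the stated margin is a 95% interval, so sigma = margin / z.
    """
    sigma = margin / z
    zscore = (threshold - forecast) / sigma
    # Upper-tail probability of the standard normal via the complementary error function
    return 0.5 * math.erfc(zscore / math.sqrt(2))

p = prob_exceeds(threshold=51, forecast=49, margin=9)
print(f"Chance the river tops a 51-foot levee: {p:.0%}")
```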

What I’m Reading: August 2016

This month my stats book is Teaching Statistics: A Bag of Tricks by Andrew Gelman and Deborah Nolan. I’m part way through it, but it’s really good. If you’ve ever had to explain statistical concepts to a group of uninterested people, this is GREAT.

Recently someone on Facebook mentioned that they were surprised that increased knowledge of unethical politician behavior seems to change the mind of absolutely no one. Turns out it may be even worse than that….there’s evidence that informing people of your potential conflicts of interest makes them more likely to follow your recommendations.

Somewhat related to that, I’m still chewing on this piece from the Atlantic about how our political process went insane. It seems overhyped to me, but if even a quarter of it’s real we should probably be nervous.

This New York Times story about a skinny woman with all of the markers of obesity was one of the more fascinating health stories I read this month.

Another good NYT story, this one about bad concussion data the NFL has been using. Apparently the group who did the study gave them the preliminary results, but never told them that the final results didn’t bear out the initial findings.

Speaking of initial data, I was bummed to hear that the reports of the Ice Bucket Challenge leading to a major ALS breakthrough are probably jumping the gun.

The six types of peer reviewers made me laugh more than a little.

Okay, some of these experiments aren’t quite as straightforward as presented here (see the Stanford Prison Experiment), but this was a really weird list.

Selection Bias: The Bad, The Ugly and the Surprisingly Useful

Selection bias and sampling theory are two of the most unappreciated issues in the popular consumption of statistics. While they present challenges for nearly every study ever done, they are often seen as boring….until something goes wrong. I was thinking about this recently because I was in a meeting on Friday and heard an absolutely stellar example of someone using selection bias quite cleverly to combat a tricky problem. I get to that story towards the bottom of the post, but first I wanted to go over some basics.

First, a quick reminder of why we sample: we are almost always unable to ask the entire population how they feel about something. We therefore have to find a way of getting a subset to tell us what we want to know, but for that to be valid, the subset has to look like the population we’re interested in. Selection bias happens when that process goes wrong. How can this go wrong? Glad you asked! Here are 5 ways:

- You asked a non-representative group. Finding a truly “random sample” of people is hard. Like, really hard. It takes time and money, and almost every researcher is short on both. The most common example of this is probably our personal lives. We talk to everyone around us about a particular issue, and discover that everyone we know feels the same way we do. Depending on the scope of the issue, this can give us a very flawed view of what the “general” opinion is. It sounds silly and obvious, but if you remember that many psychological studies rely exclusively on W.E.I.R.D. college students for their results, it becomes a little more alarming. Even if you figure out how to get in touch with a pretty representative sample, it’s worth noting that what works today may not work tomorrow. For example, political polling took a huge hit after the introduction of cell phones. As young people moved away from landlines, polls that relied on them got less and less accurate. The selection method stayed the same; it was the people who changed.

- A non-representative group answered. Okay, so you figured out how to get in touch with a random sample. Yay! This means good results, right? Sadly, no. The next issue we encounter is when respondents skew your results by opting in or out of answering in ways that are not random. This is non-response bias, and basically it means “the group that answered is different from the group that didn’t”. This can happen in public opinion polls (people with strong feelings tend to answer more often than those who feel more neutral) or when people drop out of research studies (our diet worked great for the 5 out of 20 people who actually stuck with it!). For health and nutrition surveys, people also may answer based on how good they feel about their response, or how interested they are in the topic. This study from the Netherlands, for example, found that people who drink excessively or abstain entirely are much less likely to answer surveys about alcohol use than those who drink moderately. There are some really interesting ways to correct for this, but it’s a chronic problem for people trying to figure out public opinion.

- You unintentionally double counted. This example comes from the book Teaching Statistics by Gelman and Nolan. Imagine that you wanted to find out the average family size in your school district. You randomly select a whole bunch of kids, ask them how many siblings they have, then average the results. Sounds good, right? Well, maybe not. That strategy will almost certainly overestimate the average number of siblings, because large families by definition have a better chance of being picked in any sample: a family with four kids has four chances to be selected, while a family with one child has only one. Now this can seem obvious when you’re talking explicitly about family size, but what if it’s just one factor out of many? If you heard “a recent study showed kids with more siblings get better grades than those without,” you’d have to go pretty far into the methodology section before you might realize that some families may have been double (or triple, or quadruple) counted.

- The group you are looking at self-selected before you got there. Okay, so now that you understand sampling bias, try mixing it with correlation/causation confusion. Even if you ask a random group and get responses from everyone, you can still end up with discrepancies between groups because of sorting that happened before you got there. For example, a few years ago a Pew Research survey showed that 4 out of 10 households with children had female breadwinners, but that those female-breadwinner households earned less than male-breadwinner households. However, it turned out there were really 2 types of female-breadwinner households: single moms and wives who outearned their husbands. Wives who outearned their husbands made about as much as male breadwinners, while single mothers earned substantially less. None of these groups are random, so any differences between them may have already existed.

- You can actually use all of the above to your advantage. As promised, here’s the story that spawned this whole post: bone marrow transplant programs are fairly reliant on altruistic donors. Registries that recruit possible donors often face a “retention” problem….i.e. people initially sign up, then never respond when they are actually needed. This is a particularly big problem with donors under the age of 25, who for medical reasons are the most desirable donors. Recently a registry we work with at my place of business told us about a new recruiting tactic they use to mitigate this problem. Instead of signing people up in person, they get minimal information up front, then send an email with further instructions on how to finish registering. They only sign up the people who respond to that email. This decreases the number of people who end up registering as donors, but greatly increases the share of registered donors who later respond when they’re needed. They use selection bias to weed out those least likely to be responsive….aka those who didn’t respond to even one initial email. It’s a more positive version of the Nigerian scammer tactic.
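Here’s the double-counting problem from the sibling example as a minimal Python sketch. The four families are made up for illustration: averaging over families gives the true mean family size, while asking every kid counts each family once per child. Weighting each kid’s answer by 1/(family size) is one standard way to undo this; that correction is my addition, not part of the Gelman and Nolan example.

```python
# Hypothetical school district: number of kids in each of four families
family_sizes = [1, 1, 2, 4]

# What we actually want: the average over families
per_family = sum(family_sizes) / len(family_sizes)

# What we get by surveying kids: each child reports their own family's size,
# so the family with 4 kids is counted 4 times
kids = [size for size in family_sizes for _ in range(size)]
per_child = sum(kids) / len(kids)

# One standard fix: weight each kid's answer by 1 / (their family size),
# so every family contributes a total weight of exactly 1
weights = [1 / size for size in kids]
corrected = sum(size * w for size, w in zip(kids, weights)) / sum(weights)

print(per_family, per_child, corrected)
```

Surveying kids inflates the estimate from 2.0 to 2.75 even though nobody answered incorrectly; the bias comes entirely from who got asked.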

Selection bias can seem obvious or simple, but since nearly every study or poll has to grapple with it, it’s always worth reviewing. I’d also be remiss if I didn’t include a link here for those ages 18 to 44 who might be interested in registering to be a potential bone marrow donor.

Probability Paper and Polling Corrections

This is another post from my grandfather’s newsletter (intro to that here). When I first wrote about his newsletter, I mentioned that he manufactured probability paper for people who needed to do advanced calculations in the days before computers. I found some cool examples while looking through the 1975 issues recently, so I thought I’d show them off here. First up is this paper, used to determine the “true” polling percentage when you have a lot of undecided voters. He was using an equation he called Seder’s method to adjust the pollsters’ predictions:

To use it, you find the percentage of people who gave the survey a definite answer on the x-axis, then look to the right to find the percentage of people who made a particular choice. Once you have that data point, you draw a line to the left (the traditional y-axis) to find out how many people will probably end up going with that choice once they have to make one.

I decided to try it based on a recent Quinnipiac presidential election poll (from June 29th, 2016). This has Clinton polling at 39%, Trump at 37%, Johnson at 8% and Stein at 4%, with 12% answering some combination of Unknown/Undecided/Maybe Won’t Vote/Maybe someone else. Here’s what this would look like filled out:

As you can see, it adjusts everyone a little upward, with a little more going toward those polling lower. Whether or not this is the correct adjustment is up for debate, but it’s a fun little tool to use for those who don’t like equations.
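I don’t know the details of Seder’s method, so for comparison here’s the simplest alternative as a Python sketch: split the 12% undecided in proportion to each candidate’s current support. To be clear, this is NOT my grandfather’s method, just a baseline. Under proportional splitting every candidate’s share grows by the same relative amount (about 14%), so the bigger candidates pick up more points in absolute terms.

```python
poll = {"Clinton": 39, "Trump": 37, "Johnson": 8, "Stein": 4}  # Quinnipiac, June 29, 2016
undecided = 100 - sum(poll.values())  # 12 points with no definite answer

def proportional_split(shares):
    """Reallocate undecideds in proportion to each candidate's current share."""
    decided = sum(shares.values())
    return {name: 100 * pct / decided for name, pct in shares.items()}

adjusted = proportional_split(poll)
for name, pct in adjusted.items():
    print(f"{name}: {poll[name]}% -> {pct:.1f}%")
```

Any adjustment that, like the one described above, gives relatively more to the low-polling candidates is building in an assumption that undecided voters lean away from the front-runners.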

This particular one was actually one of his easy ones. Here’s the paper for getting confidence intervals for Bernoulli probabilities:

It looks complicated, but compared to doing it by hand, this was MUCH easier. To show how much time we have on our hands now that computers do the complicated stuff, check out my take on the Bernoulli distribution here. That’s what I do while SAS is importing my files. Ah, technology.

The Signal and The Noise: Chapter 5

Apparently we’re terrible at predicting earthquakes.

That’s what Chapter 5 is about, and it makes sense. Predicting rare events (Black Swans, as Taleb would call them) is terribly difficult because you may only be working with a theoretical possibility and a limited data set. Even though we can get a general sense of where earthquakes may hit, we still don’t get much data on the major ones. This map from Wired shows some interesting regional information:

So with limited data points, the tendency is to take every data point seriously and risk overfitting the model. The other problem is not going far enough back with the data. In Japan, prior to the Fukushima disaster, evidence that major earthquakes had hit the region thousands of years ago was left out of the risk assessment.
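To see what taking every data point seriously looks like, here’s a small Python sketch with made-up numbers (not earthquake data): six points that follow a straight line plus a little noise. A polynomial that passes exactly through all six fits the data perfectly, but extrapolating it a few steps past the data goes badly wrong, while a plain least-squares line stays close to the true trend. It’s a generic illustration of overfitting, not the specific models Silver discusses.

```python
# Hypothetical data: a straight-line trend (y = 2x) plus a little fixed "noise"
xs = [0, 1, 2, 3, 4, 5]
ys = [0.5, 1.6, 4.3, 5.4, 8.4, 9.8]

def lagrange(x, xs, ys):
    """Polynomial passing exactly through every data point (degree 5 for six points)."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        term = yi
        for j, xj in enumerate(xs):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total

def linear_fit(xs, ys):
    """Ordinary least-squares line: smooths over the noise instead of chasing it."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return lambda x: y_bar + slope * (x - x_bar)

line = linear_fit(xs, ys)
# Extrapolate to x = 8, where the true trend value is 16
print(f"polynomial: {lagrange(8, xs, ys):.1f}")
print(f"line:       {line(8):.1f}")
```

The polynomial has zero error on the data it was built from and lands around -423 at x = 8, while the line predicts about 15.7: a perfect fit to past data is no guarantee of a sensible forecast.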

My most memorable earthquake experience was actually a few weeks after my son was born. I was feeding him, and I thought a large truck had gone by. Something felt off though, and he seemed surprisingly confused by it. When I went downstairs again, I checked the news and realized that “truck” had been an earthquake.

5 Things You Should Know About the Body Mass Index

This post comes from a reader question asking for my opinion on the Body Mass Index (BMI). Quick intro for the unfamiliar: the BMI is a calculated value that relates your height and weight. It takes your weight (in kilograms) and divides it by your height (in meters) squared. For those of you in the US, that’s weight (in pounds) times 703, divided by height (in inches) squared. Automatic calculator here. A BMI of less than 18.5 is considered underweight, 18.5-24.9 is normal, 25-29.9 is overweight, and 30 or higher is obese. So what’s the deal with this thing?
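The two formulas and the cutoffs fit in a few lines of Python. This is a minimal sketch; the example weights and heights are arbitrary, and 703 is the usual rounded factor for converting between the unit systems.

```python
def bmi_metric(weight_kg, height_m):
    """BMI = weight (kg) / height (m) squared."""
    return weight_kg / height_m ** 2

def bmi_imperial(weight_lb, height_in):
    """Same index in US units: weight (lb) * 703 / height (in) squared."""
    return weight_lb * 703 / height_in ** 2

def bmi_category(bmi):
    """Standard adult cutoffs."""
    if bmi < 18.5:
        return "underweight"
    if bmi < 25:
        return "normal"
    if bmi < 30:
        return "overweight"
    return "obese"

b = bmi_metric(70, 1.75)
print(f"{b:.1f} -> {bmi_category(b)}")
b = bmi_imperial(154, 69)
print(f"{b:.1f} -> {bmi_category(b)}")
```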

- It was developed for use in population health, and it’s been around longer than you might think. The BMI was invented by Adolphe Quetelet sometime between 1830 and 1850. He was a statistician who needed an easy way of comparing population weights that actually took height into account. Now this makes a lot of sense….height is more strongly correlated with weight than any other variable. (In fact, as a species we’re about 4 inches taller than we were when the BMI was invented.) Anyway, it was given the name “Body Mass Index” by Ancel Keys in 1972. Keys was conducting research on the relative obesity of different populations throughout the world, and sorted through the various equations relating height and weight to see how well they correlated with measured body fat percentage. He determined this one was the best, though his comparisons did not include women, children, people over 65, or non-Caucasians.

- Being outside the normal range means more than being inside of it. So if Keys was looking for something that correlated with body fat percentage, how does the BMI do? Well, a 2010 study found that the correlation is about r = 0.66 for men and r = 0.84 for women. However, the researchers also looked at its usefulness as a screening test: how often did it accurately sort people into “high body fat” or “not-high body fat”? With a cutoff of 30, the positive predictive value is better than the negative predictive value. So basically, if you know you have a BMI over 30, you are also likely to have excess body fat (87% of men, 99% of women). However, among those with a BMI under 30, about 40% of men and 46% of women still had excess body fat. If you move the line down to a BMI of 25, some gender differences show up: 69% of men with BMIs over 25 actually have excess body fat, compared to 90% of women. This means about 30% of “overweight” males are actually fine. About 20% of both genders with BMIs under 25 actually have excess body fat. So basically, if you’re above 30 you almost certainly have excess body fat, but being below that line doesn’t necessarily let you off the hook.

- It doesn’t always take population demographics into account. One possible reason for the gender discrepancy above is height….BMI is actually less accurate the further you fall outside the 5’5”-5’9” range. I would love to see the data from the previous point rerun by height rather than by gender, to see if the discrepancy holds. In terms of health predictions, BMI cutoffs also show variability by race. For example, a white person with a BMI of 30 carries the same diabetes risk as a South Asian person with a BMI of 22 or a Chinese person with a BMI of 24. That’s a huge difference, and it is not always accounted for in worldwide obesity tables.

- Overall, it’s pretty well correlated with early mortality. So with all the inaccuracies, why do we use it? Well, this is why:


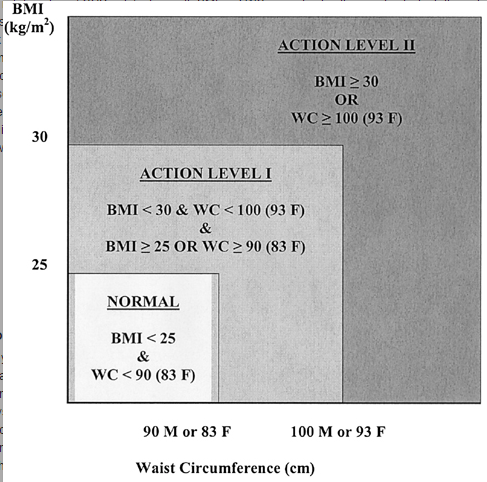

That graph is from this 2010 paper that looked at 1.46 million white adults in the US. The hazard ratio is for all-cause mortality at the ten-year mark (median starting age was 58). Particularly at the higher numbers, that’s a pretty big difference. To note: some other observational studies have found a slightly different curve shape, especially at the lower end (BMI 25-30), suggesting an “obesity paradox”. More recent studies haven’t found this, and there’s some controversy about how to correctly interpret these studies. The short version is that correlation isn’t causation, and we don’t know whether losing weight improves these numbers.

- For individuals on the borderline, you need another metric. Back to individuals though….should you take your BMI seriously? Well, maybe. It’s pretty clear that if you’re getting a number over 30, you probably should. There’s always the “super-muscled athlete” exception, but you’d pretty much know if that were you. If you need another quick metric to assess disease risk, though, it looks like using a combination of waist circumference and BMI may give a better picture of health than BMI alone, especially for men. Here’s the suggested action range from that paper:

While waist circumference is obviously not something that most people know off the top of their head, it should be easy enough for doctors to take in an office visit.


Overall, it’s important to remember that metrics like the BMI or waist circumference are really just screening tests, and you get what you pay for. While we hope they catch most people who are at high risk, there will always be false positives and false negatives. While in population studies these may balance each other out, for any individual it’s important to look at all the various factors that go into health. So, um, talk to your doctor and avoid over-interpretation.
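Positive and negative predictive value are easy to mix up, so here’s a small Python sketch computing them (along with sensitivity and specificity) from a 2x2 table. The counts are invented, loosely shaped like the BMI-over-30 pattern for men described earlier: most people flagged really do have excess body fat, but many who aren’t flagged do too. They are not the study’s data.

```python
def screening_stats(tp, fp, fn, tn):
    """Summary stats for a screening test from a 2x2 table of counts.

    tp: flagged and truly high body fat    fp: flagged but not high body fat
    fn: missed but truly high body fat     tn: not flagged and not high body fat
    """
    return {
        "sensitivity": tp / (tp + fn),  # share of true cases the test catches
        "specificity": tn / (tn + fp),  # share of non-cases it correctly clears
        "ppv": tp / (tp + fp),          # if flagged, chance you really have it
        "npv": tn / (tn + fn),          # if cleared, chance you really don't
    }

# Hypothetical counts for 1,000 people screened at a BMI cutoff of 30
stats = screening_stats(tp=180, fp=20, fn=320, tn=480)
for name, value in stats.items():
    print(f"{name}: {value:.0%}")
```

With these made-up counts the PPV is 90% but the NPV is only 60%, meaning 40% of the people under the cutoff still have excess body fat: a decent test for ruling a problem in, a poor one for ruling it out.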

The Tim Tebow Fallacy

I’ve blogged a lot about various cognitive biases and logical fallacies here over the years, but today I want to talk about one I just kinda made up: The Tim Tebow Fallacy. Yeah, that’s right, this guy:

I initially mentioned the premise in this post, but for those of you who missed it, here’s the background: Tim Tebow is a Heisman Trophy-winning quarterback who played in the NFL from 2010 to 2012. Despite his short career, in 2011 he was all anyone could talk about. Everyone had an opinion about him and he was unbelievably polarizing, despite being a quite pleasant individual and a good-but-not-great player. It was all a little baffling, and writer Chuck Klosterman took a crack at explaining the issue here. In trying to work through the controversy, he made this observation:

On one pole, you have people who hate him because he’s too much of an in-your-face good person, which makes very little sense; at the other pole, you have people who love him because he succeeds at his job while being uniquely unskilled at its traditional requirements, which seems almost as weird. Equally bizarre is the way both groups perceive themselves as the oppressed minority who are fighting against dominant public opinion, although I suppose that has become the way most Americans go through life.

Ever since I read that, I’ve been watching political conversations and am stunned by how often this type of thinking happens. It seems some people not only want to have a belief and defend it, but also want some sort of cachet from holding an unacknowledged or rare belief. It’s like a reverse bandwagon effect (where someone likes something more because it’s not popular) combined with a type of majority illusion (where people inaccurately assess how many people actually hold a particular opinion). So as a fallacy, I’d say it’s when you find a belief more attractive and more correct because it runs counter to what you believe popular perception to be. To put it more technically:

Tim Tebow Fallacy: The tendency to increase the strength of a belief based on an incorrect perception that your viewpoint is underrepresented in the public discourse

Need an example? Take a group conversation at a party: Person A mentions they like the Lord of the Rings movies. Person B pipes up that they actually really didn’t like them. Person C agrees with Person B, and the two bond a bit over finding out they share this unusual opinion. After a minute or two, Person A is getting a little frustrated and is now an even BIGGER fan of the movies. If it stopped there it wouldn’t be a Tebow fallacy, just regular old defensiveness. What kicks it over the edge is when Person A starts claiming “no one ever talks about how good the cinematography was in those movies!” or “no one really appreciates how innovative those were!” or “no one ever gives geeky stuff any credit!” They walk away irritated, believing that saying you like the Lord of the Rings movies has become sort of a subversive act, and that general defensiveness is called for.

Now of course this is all kind of poppycock. The Lord of the Rings movies are some of the most highly regarded movies of all time, and set records for critical acclaim and box office draw. The viewpoint Person A was defending is the dominant one in nearly every circle except the one they happened to wander into that night, yet they’re defensive and feel they need to keep proving their point. It’s the Tim Tebow Fallacy.

Now, I think there are a couple of reasons this sort of thing happens, and I suspect many of them are getting worse because of the internet:

- We’re terrible at figuring out how widespread opinions are. In my Lord of the Rings example, Person A extrapolated small-group dynamics to the general population, likely without even realizing it. Now this is pretty understandable when it happens in person, but it gets really hard to sort through when you’re reading stuff online. Online you could read pages and pages of criticism of even the most well-loved stuff, and come away believing many more people think a certain way than actually do. Even if 99.9% of Americans love something, that still leaves about 325,000 who don’t. If those people have blogs or show up in comments sections, it can leave you with the impression that their opinions are more widely held than they are. And make no mistake, this influences us. It’s why Popular Science shut off their comments section.

- We feed off each other and headlines. The internet being what it is, let’s imagine Person A goes home and vents their frustrations with Person B and C online. What started as an issue at one party now turns into an anecdote that can be spread. The effect of this should not be underestimated. A few months ago someone sent me this story about a writer with a large-ish Twitter following who had Tweeted a single picture of a lipstick named “Underage Red” with the caption “Just went shopping for some makeup. How is this a lipstick color?”. The whole story is here, but by the end of it her single Tweet had made it all the way to Time Magazine as proof of a “major controversy” and was being cited as an example of “outrage culture”. She was inundated with people (including the lipstick’s creator, Kat Von D) calling her out for her opinion, all seemingly believing they were fighting the good fight against a dominant narrative. A narrative that consisted of a single, rather reasonable Tweet about a lipstick. I don’t blame those people, by the way. I blame the media that creates an “outrage” story out of a single Tweet, then follows up with think pieces about “PC culture” and “oversensitivity”. The point is that in 2016 a single anecdote going viral is really common, but I’m not sure we’ve all adjusted our reactions to account for the whole “wait, how many people actually think this way?” piece. It’s even worse when you consider how often people lie about stuff. Throw in a few fabricated, exaggerated, or one-sided retellings, and suddenly you can have viral anecdotes that never even happened.

- It’s human nature to strive for optimal distinctiveness. While our tendency to go along with the crowd gets a lot of attention, I think it’s worth noting that humans are actually a little ambivalent about conformity. The theory of optimal distinctiveness argues that we are all simultaneously striving to differentiate ourselves just enough to be recognized, while not going so far as to be ostracized. Basically, we want to be in the middle of this graph:

From Brewer, M.B. (1991). “The social self: On being the same and different at the same time”. Personality and Social Psychology Bulletin, 17, 475-482.

By positioning our arguments against the dominant narrative, we can both defend something we really believe in AND differentiate ourselves from the group. I think that makes this type of fallacy uniquely attractive for many people.

- We’re selecting our own media sources, then judging them. One of my favorite humor websites of all time is Hyperbole and a Half by Allie Brosh. After the site went viral, Brosh put together an FAQ that includes this question and answer:

Question: I don’t think you’re funny and I’m frustrated that other people do.

Answer: It’s okay. Try not to be too upset about it. Humor is simply your brain being surprised by an unexpected variation in a pattern that it recognizes. If your brain doesn’t recognize the pattern or the pattern is already too familiar to your brain, you won’t find something humorous.

With the internet (and cable news, Facebook, Twitter, etc.) we now have the ability to see hundreds or thousands of different opinions in a single week. What we often fail to recognize is that we actually select most of these ourselves: who we follow or friend, which webpages we visit, and so on. Everyone else does too. We are all constructing very individualized patterns of information intake, and it’s hard to know how usual or unusual our own pattern is. Instead of just “those who loved the Lord of the Rings movies” and “those who didn’t”, there’s also “those who hated it because they are huge book fans”, “those who don’t like any movie with magic in it”, “those who hated it because they hate Elijah Wood”, “those who prefer Meet the Feebles”, “those who didn’t get that whole ring thing”, “those who thought that was the one with the lion”, “those who liked it until they heard all the hype then wished everyone would calm down”, etc. etc. etc. The point is, we often select for certain opinions, then react to the opinions we selected ourselves and take it out on others who may legitimately never have encountered the opinion we’re talking about. If you combine this with point #3 above, you can see how we end up positioning ourselves against a narrative others may not even be hearing.

- It’s a common mistake for great thinkers. Steve Jobs is widely considered one of the most innovative and intuitive businessmen of all time. His success largely came from his uncanny ability to identify gaps in the tech marketplace that were invisible to everyone else and then fill them better than anyone. What often gets lost in the quick blurbs about him, though, is how often he misfired. He initially thought Pixar should be a hardware company. He whiffed on multiple computer designs and had a whole confusing company called NeXT… and he’s still considered one of the best in the world at this. Great thinkers are always trying to find what others are missing, and even the best screw this up pretty frequently. As with many fallacies, it’s important to remember that IQ points offer limited protection. What makes your mind great can also be your downfall.

- It gets us out of dealing with uncomfortable truths. One of the first brutal call-outs I ever got on the internet was over a glib and stupid comment I made about the Iraq War. It was back in 2004 or so, and I got in an argument with someone I respected over whether or not we should have gone in. I was against the war, and at some point in the debate I got mad and said that my issue was that George Bush “hadn’t allowed any debate”. I was immediately jumped on, and it was pointed out to me in no uncertain terms that I was just flat out making that up. There had been endless debate; I just didn’t like the outcome. That stung like hell, but it was true. Regardless of what anyone believes about the Iraq War then or now, we did debate it. It was easier for me to believe that my preferred viewpoint had been systematically squashed than that others had listened to it and not found it compelling. This works the other way too. I’m sure in 2004 I could have found someone claiming vociferously that we’d over-debated the Iraq War, based mostly on the fact that they had made up their mind early. Most recently I saw this happen with Pokemon Go, where the “I’m totally sick of this, can we all stop talking about it” statuses showed up on Facebook about 30 minutes after the ones talking about how great it was. I’m not saying there’s a “right” level of public discourse, but I am saying that it’s hard to judge a “wrong” level without being pretty arbitrary. We all want the game to end when our team’s ahead, but it really just doesn’t work that way.

So that’s my grand theory right there. As I hope point #6 showed, I don’t think I’m exempt from this. A huge amount of this blog and of my real-life political discussions are based on the premise of “not enough people get this important fact I’m about to share”. I get it. I’m guilty.

On the other hand, I think in the age of the internet, when we can be exposed to so many different viewpoints, we should be careful about how we let the existence of those viewpoints influence our own feelings. If the forcefulness of our arguments is always indexed to what we think the forcefulness of our opposition is, that will leave us progressively more open to infection by the Toxoplasma of Rage. As the opportunities for creating our own “popular narrative” increase, we have to be even more careful to reality-check that narrative from time to time. Check the numbers in opinion polls. Read media that opposes you. Make friends outside your demographic. Consider criticism. Don’t play for the Jets. You know, all the usual fallacy stuff.
