Welcome to “From the Archives,” where I revisit old posts to see where the science (or my thinking) has gone since I originally put them up.

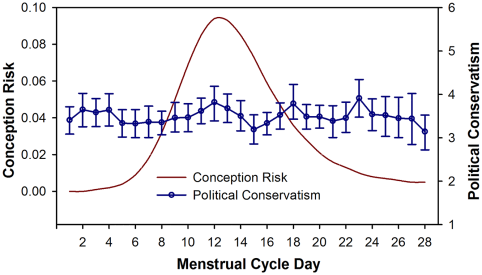

Back in good old October of 2012, it was an election year and I was getting irritated1. First, I was being bombarded with Elizabeth Warren vs Scott Brown Senate ads, and then I was confronted with this study: The fluctuating female vote: politics, religion, and the ovulatory cycle (Durante et al.). It purported to show that women’s political and religious beliefs varied wildly over their monthly cycle, but in different ways depending on whether they were married or single. For single women, the authors claimed that being fertile made them more liberal and less religious, because they had more liberal attitudes toward sex. For married women, being fertile made them more conservative and religious, supposedly to compensate for an urge to cheat. The swing was wide, too: about 20%. Of note, the study never actually observed any women changing their vote; it compared two groups of women and looked for differences between them. The study got a lot of attention because CNN initially put it up, then took it back down when people complained. I wrote two posts about this: one irritated and ranty, and one pointing out some more technical issues I had.

With a new election coming around, I was thinking about this paper and wanted to see where it had gone since then. I knew that Andrew Gelman had ultimately taken shots at the study for reporting an implausibly large effect2 and for potentially collecting lots of data and comparisons but publishing only some of them, so I was curious how this study had subsequently fared.

Well, there are updates! First, in 2014, a different group tried to replicate the results in a paper called Women Can Keep the Vote: No Evidence That Hormonal Changes During the Menstrual Cycle Impact Political and Religious Beliefs by Harris and Mickes. This paper recruited a different sample but essentially recreated much of the original analysis, with one major addition: they conducted their survey both before AND after the 2012 election, so they could compare predicted voting behavior with actual voting behavior. A few findings:

- The first paper (Durante et al) had found that fiscal policy beliefs didn’t change for women, but social policy beliefs did change around ovulation. The second paper (Harris and Mickes) failed to replicate this finding, and also failed to detect any change in religious beliefs.

- In the second paper, married women had a different stated preference for Obama depending on fertility (higher at low fertility, lower at high fertility), but that difference went away when you looked at how they actually voted. For single women, it was the opposite: they reported the same level of preference for Obama regardless of fertility, but voted differently depending on the time of the month.

- The original Durante study had taken some heat for how it assessed fertility. There were concerns that self-reported fertility was so likely to be inaccurate that it would render any conclusions void. I was interested to see Harris and Mickes clarify that the Durante paper hadn’t accurately described how its fertility assessments were done, and that both teams had ultimately used the same method. This was supposed to be in the supplementary material, but I couldn’t find a free copy of that online. It’s an interesting footnote.

- A reviewer asked them to combine the pre- and post-election data to see if they could find a fertility/relationship interaction effect. When the pre- and post-election data were kept separate, there was no effect. When they were combined, there was.

That last point is where things got a little interesting. Harris and Mickes said combining their data was not valid, but Durante et al. hit back and said “why not?”. There’s an interesting piece of stat/research geekery about the dispute here, but the TL;DR version is that this could be considered a partial replication or a failure to replicate, depending on your statistical strategy. Unfortunately, this is one of those areas where there is legitimate concern that a researcher’s judgement calls are being shaded by their view of the outcome. Since we don’t know what either team’s original analysis plan was, we don’t know whether either one modified its strategy based on the results. Additionally, “is it valid to combine these data sets?” is a good question, and it would be open for discussion even if the topic were totally innocuous. The political nature of the subject intensifies the debate, but it didn’t create it.

Fast forward now to 2015, when yet another study was published: Menstrual Cycle Phase Does Not Predict Political Conservatism. This study was done using data ALSO from the 2012 election cycle3, but with a few further changes. The highlights:

- This study, by Scott and Pound, addressed some of the “how do you measure fertility when you can’t test for it” concerns by asking about medical conditions that might influence fertility, so they could screen out women whose self-reporting might be less accurate. They also ranked fertility on a continuum rather than as a dichotomous “high” vs “low”. Both changes should have made their assessment more accurate.

- The other two studies both asked about voting in terms of Romney vs Obama. Scott and Pound were concerned that this might capture a change in preference for Obama and Romney as people rather than a political change. They measured both self-reported political leanings and a “moral foundations” test, combined them into an overall “conservatism” rank, and then tracked that against the probability of conception.

- They controlled for age, number of children, and other sociological factors.

So overall, what did this show? Well, basically, political philosophy doesn’t vary much no matter where a woman is in her cycle.

The authors have a pretty interesting discussion at the end about the problems with Mechanical Turk (where all three studies recruited their participants within the same few months), the difference between measuring personal preference (Obama vs Romney) and political preference (Republican vs Democrat), and some statistical analysis problems.

So what do I think now?

First off, I’ve realized that getting all ranty when someone brings up women’s hormones affecting things may be counterproductive. Lesson learned.

More seriously, though, I find the hypothesis that our preferences for individual candidates may change with hormonal shifts more compelling than the hypothesis that our overall philosophy of religion or government changes with our hormones. The first simply seems more plausible to me. In a tight presidential election, though, this may be hopelessly confounded by the candidates’ actual behavior. It’s pretty well known that single women voted overwhelmingly for Obama, and that Romney had a better chance of capturing the votes of married women. Candidates know this and can play to it, so if a candidate makes a statement playing to their base, you may see shifts that have nothing to do with the voters’ hormones and are instead a reaction to real-time statements. This may be a case where research into the hypothetical (i.e. made-up candidate A vs B) would be helpful.

The discussions on fertility measures and statistical analysis were interesting and gave good insight into how much study conclusions can change based on how we define particular metrics. I was happy to see that both follow-up papers hammered on clear and standard definitions for “fertility”. If that is one of the primary metrics you are assessing, then the utmost care must be taken to assess it accurately, or else the noise can easily swamp the signal.

Do I still think CNN should have taken the story down? Yes... but only as much as I believe they should take down most sensational new social/psych research stories. If you follow the research for just two more papers, you see the conclusion go from broad (women change their social, political and religious views and votes based on fertility!) to much narrower (women may in some cases change their preference or voting patterns for particular candidates based on fertility, but their religious and political beliefs do not appear to change). I’ll be interested to see whether anyone tries to replicate this with the 2016 election, and if so, what the conclusions are.

This concludes your trip down memory lane!

1. Gee, this is sounding familiar↩

2. This point was really interesting. He pointed out that around elections, pollsters are pretty obsessive about tracking things, and short of a major scandal breaking, literally NOTHING causes a rapid 20-point swing. The idea that swings that large were happening regularly and everyone had missed them seemed implausible to him. Statistically, of course, the authors were only testing whether there was a difference at all, not how big it was... but the large effect should possibly have given them pause. It would be like finding that ovulation made women spend twice as much on buying a house. People don’t change THAT dramatically, and if you find that they do, you may want to rerun the numbers.↩

3. Okay, so I can’t be the only one noticing at this point that this means three different studies all recruited around 1000 American women of childbearing age who were not on birth control, not pregnant, not recently pregnant or breastfeeding, and interested in participating in a study on politics, all at the same time and all through Amazon’s Mechanical Turk. Has anyone asked the authors to compare how much of their samples were actually the same women? Does Mechanical Turk have any barriers to this? Do we care? Oh! Yes, turns out this is actually a bit of a problem.↩